Visual Search: Future Growth and Opportunities

What is visual search? What are the top visual search platforms? How will visual search affect text-based searches? We explore it all in this article.

It’s no secret: typing is inefficient.

When you see a stranger rocking a pair of sneakers you like, traditional text-based searches require you to awkwardly ask them what they are wearing.

Then you have to type what they tell you into Google and, if you don’t misspell any words, you might find the shoes you need.

Seems like a lot of effort just to find out what shoe someone is wearing.

Now imagine if you could just take a picture of those shoes and have the results automatically come up on your phone.

This is visual search doing its magic.

And it’s the reason that some of the biggest companies are pouring massive amounts of money into developing this search technology.

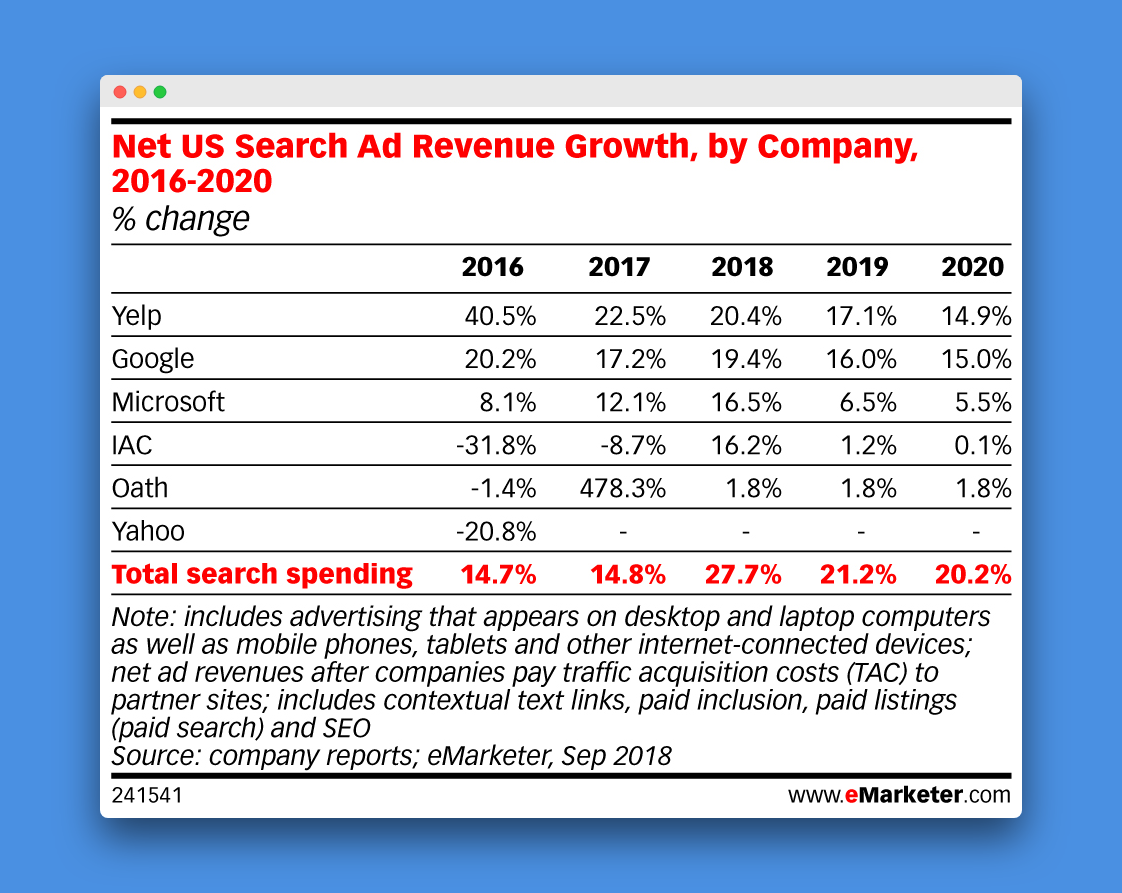

Because like it or not, text search is dying. Here is a graph from eMarketer that projects the growth rates for search ad revenue until 2020. They are headed downhill.

What kinds of searches are going up?

Video, Voice and Visual. The Three V’s.

But while there is a ton of focus on voice and video, visual search is not getting as much attention.

In this article we are going to delve deep into the numbers and see how fast visual search is growing, who the biggest players are, and how marketers can tap into this growing field.

But let’s first begin with a definition.

What is visual search?

Visual search uses images, rather than text, as the search query. This is different from searches on Google Images, where text is still used to find the best-matching image.
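To make the idea of "an image as the query" concrete, here is a minimal sketch. It assumes each image has already been turned into a short list of numbers (a feature vector) by some image model; the article does not say how any particular platform does this. The catalog entries, the vectors and the function names are all invented for illustration.

```python
import math


def cosine_similarity(a, b):
    """Return how closely two feature vectors point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def visual_search(query_vector, catalog, top_k=3):
    """Rank catalog items by similarity to the query image's vector."""
    scored = [
        (cosine_similarity(query_vector, vector), name)
        for name, vector in catalog.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:top_k]]


# Hypothetical catalog: in a real system these vectors would come from an
# image model, not be typed in by hand.
CATALOG = {
    "white running sneaker": [0.9, 0.1, 0.0],
    "white tennis shoe": [0.7, 0.3, 0.1],
    "brown leather boot": [0.1, 0.9, 0.2],
    "silver wristwatch": [0.0, 0.1, 0.9],
}

# Hypothetical vector for the photo the user just took.
query = [0.85, 0.15, 0.05]
results = visual_search(query, CATALOG, top_k=2)
print(results)
```

No text is typed at any point: the photo itself, in vector form, is compared against the catalog.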

Visual search turns the camera on your phone into a search engine. You can just point to any object you like, take a picture, and receive search results based on the item.

That is pretty powerful technology. And there are a host of different fields where this technology could be applied.

What industries could visual search be used for?

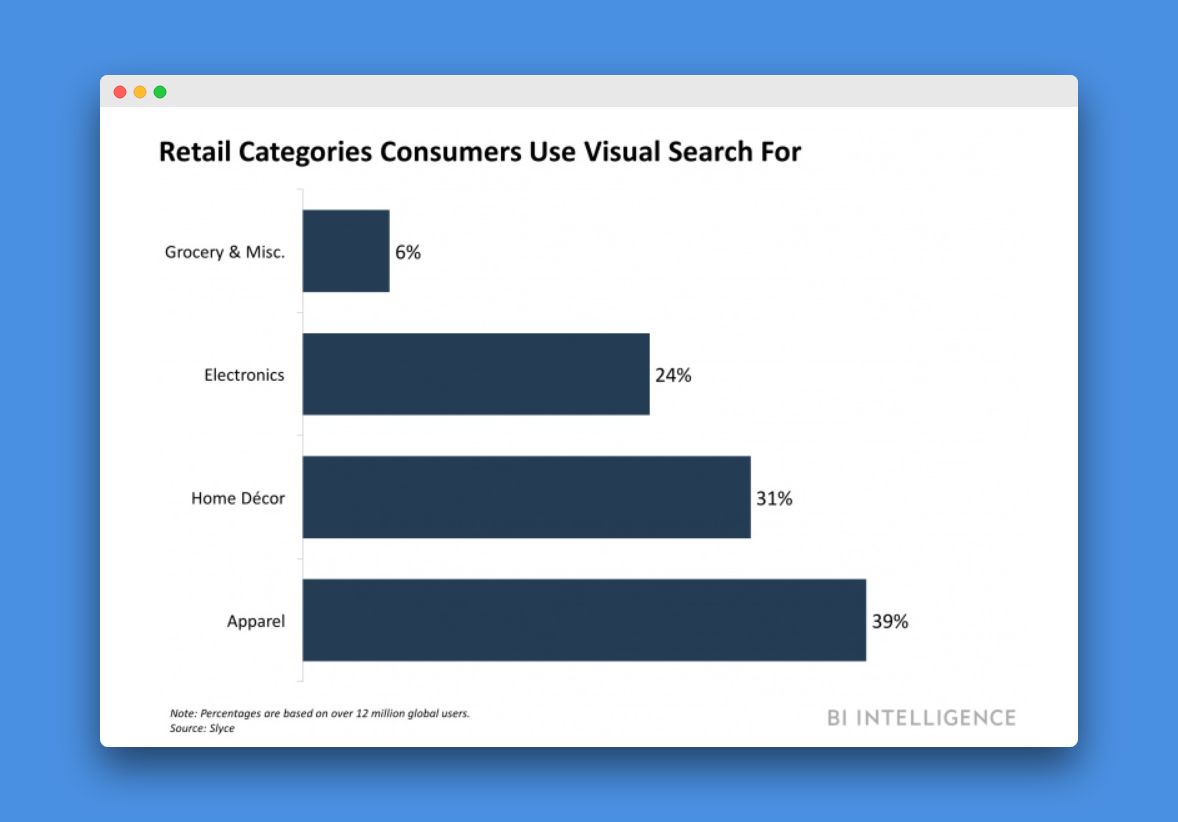

The most obvious sector is ecommerce and retail. The clothes people wear become walking advertisements. A watch, a pair of shoes, furniture in a building, you just snap away and get search results identifying the item and sales offers.

Another area visual search could be applied is the travel industry. Imagine walking down the streets of Rome and not needing a tour guide anymore. With visual search you just point your camera at a building and instantly get all the information you need about when it was built, who built it, what it is used for today and so on.

Source: Business Insider

Visual search clearly has a lot of potential. So then why are we not hearing more about it?

Well, part of the reason is that it still accounts for a small amount of overall searches.

Estimates put the number of visual searches at perhaps 1 billion per month. This is a lot, but it pales in comparison to the several billion text searches initiated.

However, this hasn’t stopped major companies from delving into the technology. In fact, there is an active war going on by the biggest brands to become your visual search app of choice.

What are the top visual search platforms?

Pinterest Lens

Pinterest Lens launched in February 2017. It uses your phone's camera from within the Pinterest app to take a picture of an object and then shows pins similar to what you photographed.

In February 2018 Pinterest reported that there were 600 million visual searches taking place on its app every month, a 140% increase year-over-year.

According to Pinterest, the most popular categories in its Lens searches include:

- Fashion

- Home decor

- Art

- Food

- Products

- Animals

- Outfits

- Beauty

- Vehicles

- Travel

And the most popular trending searches include:

- Tattoos

- Nails

- Sunglasses

- Jeans

- Cats

- Wedding Dress

- Plants

- Quilts

- Brownies

- Natural hair

Google Lens

Google Lens was launched on October 4th, 2017, but it only gave people access to a general visual search engine. An update in May 2018 embedded Google Lens in the camera, Google Assistant and Google Photos apps of Android devices.

On October 25th, 2018, Google said it was releasing Lens within Google Images. The new feature lets users click on an object within a photo on Google Images to pull up similar products or related pages.

For instance, if you see a photo of a kitchen and you like the table in it, you can click on the table in the photo and Google will pull up links to similar tables where you can buy the item.

Instagram Shopping

Instagram is also developing a visual search platform. In a recent blog post on September 17, 2018, Instagram announced plans to launch a new shopping feature that would incorporate elements of visual search.

More than 400 million Instagram users view Stories every day, and the new feature lets them click on a product that appears in an Instagram Story while they are watching it. They are then given a link to either buy the product or learn more about it.

In this way, Instagram hopes to penetrate the online shopping market. Rumors have been circulating since this summer that Instagram plans to launch a standalone IG Shopping App which will focus solely on using images and stories for shopping.

Amazon Rekognition

Rather than develop its own visual search engine, Amazon has made its visual search technology available to third parties. The platform is called Rekognition, and it lets other companies work with Amazon to link images to products that are on sale on Amazon.

Amazon Rekognition allows third parties to add image and video analysis to their applications. The third parties provide the image or video to Amazon and the Rekognition API then identifies the objects, people or text in the material provided.
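As a rough illustration of that flow, the sketch below sends image bytes to Rekognition's `detect_labels` operation through the AWS SDK for Python (boto3) and returns the names and confidence scores it reports. The helper names, the default thresholds, the region and the file name in the usage comment are assumptions made for this example, and running it against the real service requires AWS credentials.

```python
def detect_objects(client, image_bytes, max_labels=10, min_confidence=80.0):
    """Ask Rekognition which objects or scenes it sees in an image.

    `client` is a boto3 Rekognition client (or any object that exposes a
    compatible `detect_labels` method, which makes local testing easy).
    """
    response = client.detect_labels(
        Image={"Bytes": image_bytes},
        MaxLabels=max_labels,
        MinConfidence=min_confidence,
    )
    return [(label["Name"], label["Confidence"]) for label in response["Labels"]]


def make_rekognition_client(region_name="us-east-1"):
    """Create a real client; the region is an assumption for this sketch."""
    import boto3  # imported lazily so detect_objects works without the SDK

    return boto3.client("rekognition", region_name=region_name)


# Example usage (hypothetical file name; needs AWS credentials):
# with open("kitchen.jpg", "rb") as photo:
#     for name, confidence in detect_objects(make_rekognition_client(), photo.read()):
#         print(f"{name}: {confidence:.1f}%")
```

Passing the client in as an argument keeps the function easy to try out with a stand-in object before connecting it to a live AWS account.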

The features include facial recognition similar to that used by Facebook to suggest friends to tag in pictures, as well as the ability to identify celebrities, the type of activity or scene captured in a photo and more.

Snapchat

On September 24, 2018 Snapchat announced that it was introducing a visual search option in its app. Instead of developing its own technology, Snapchat has decided to partner with Amazon and use their Rekognition API as the basis of their visual search feature. It will be rolled out slowly over the rest of 2018 and into 2019.

The feature will allow users to take a photo of an item and be sent straight to the matching product in Amazon's app or on its website, where they can complete the purchase.

eBay

In the summer of 2017 eBay announced it would launch a visual search tool allowing users to use images to better shop for products on its platform. In this way, the company is hoping to enter the visual search game and better compete with the likes of Pinterest, Amazon and Google.

eBay offers two visual search options on its platform. The first is Image Search, which lets users take a photo with their smartphone camera; the app then pulls up similar goods on eBay related to the picture they took.

The second feature is Find It On eBay, which allows users to take a photo they find on the web and submit it to eBay, which will then use its visual search technology to find similar products related to the image.

Bing Visual Search

Bing Visual Search launched on June 21st, 2018, giving users the ability to search for, shop and find information about the world around them through the photos they take on their phone.

For example, Bing’s visual search engine lets you take a photo of a flower you like; the Bing app will then identify the type and provide you with links to more information, such as a Wikipedia page.

The Bing app also lets you shop for goods. If your friend is wearing a jacket you like, simply take a picture or upload the picture of your friend’s jacket and Bing will then return pictures, prices and similar jackets.

Language Services

Visual search offers enormous opportunities when it comes to reducing language barriers. With Google Lens, a user can simply point their camera at a sign in a foreign language and it will be translated automatically on their phone. As a result, visual search can be extremely useful in foreign markets, especially in places that use different writing systems, such as Russia, China, Japan and the Arab world.

QR Codes

There are around 70 million smartphone barcode scanners in the US alone. Visual search platforms by major players like Snapchat, Google and Pinterest have features that allow them to scan QR codes. QR codes serve to link physical objects with online content. Pinterest launched Pincodes in 2017, and retailers such as The Home Depot and Nordstrom have used them in their stores.

The problems with Visual Search

1. Technical complications

Visual search clearly has its advantages over text-based search. It’s simply much easier to take a picture of an item, which requires only one click, as opposed to typing out a request on a search engine.

The problem is that, from a technical standpoint, visual search is quite complicated. It requires complex AI programs and enormous amounts of data to be effective. As more and more people begin using images for searches, the AI is bound to improve, but so far progress has been slow.

2. Unclear Intent

Another problem with visual search lies with the intent of a user who took a picture. A photo of a labeled product in a grocery store could be a request to see similar food products, or it could be a request for a translation. The user's intent when taking a picture is not immediately clear with visual search, the way it is with text.

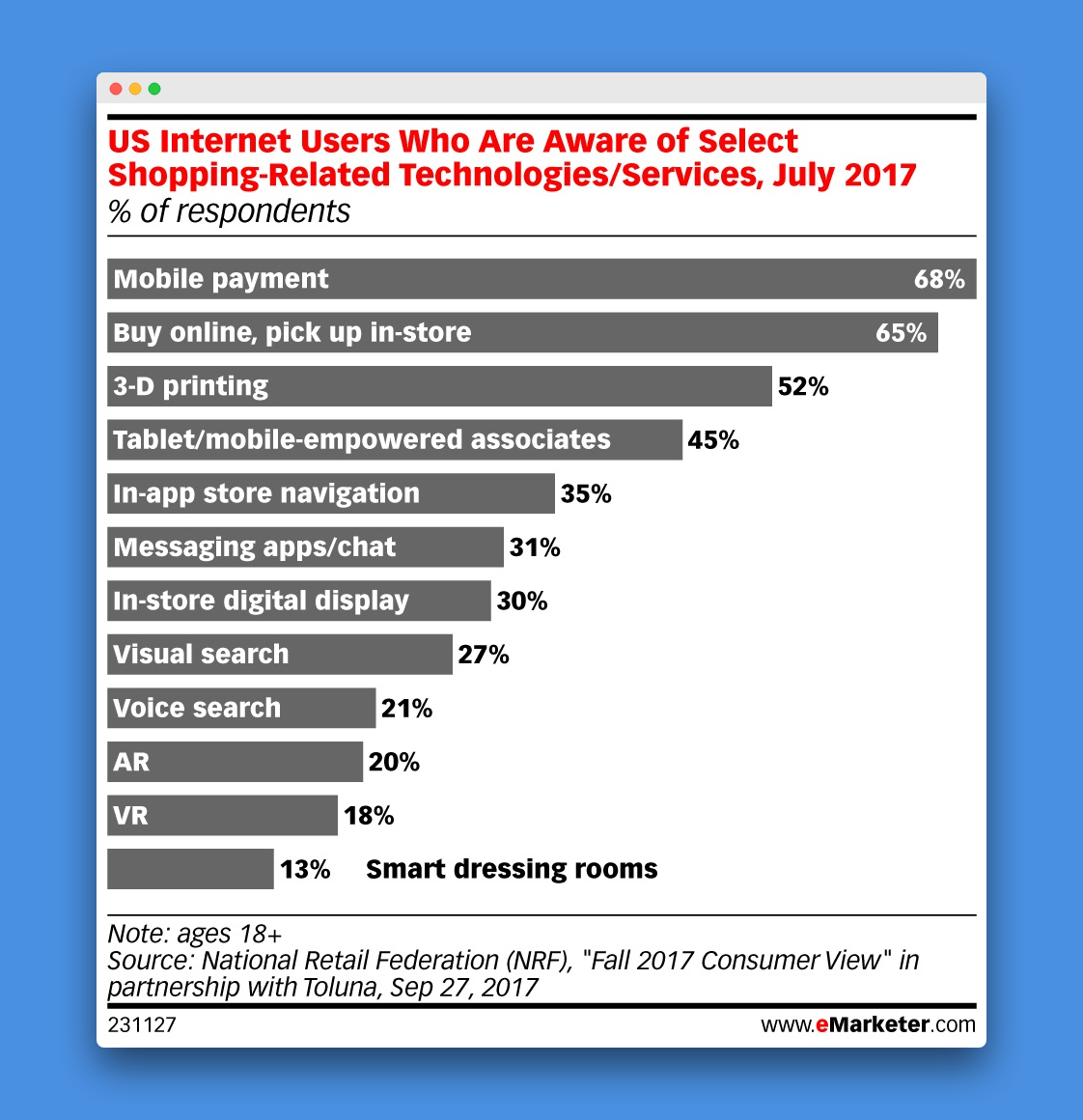

3. Low usage

There is also the ongoing problem of low usage. Despite its convenience, the number of visual searches pales in comparison to those of text-based searches. In fact, a study in 2017 showed that only 27% of internet users were aware of the technology.

When is Visual Search most effective?

Visual Search is most effective when it answers questions that are hard to put into words. This is most commonly the case when you don’t know the name of a product.

Here are some examples.

In a grocery store you see a type of produce you like, but don’t know the exact name. Simply taking a photo of the produce is much more convenient than typing out “what type of squash is this?”

While you are visiting a new city as a tourist, a historic building piques your interest. A text-based search like “what building is this?” does not work, whereas a picture of the building does.

Someone walks into a restaurant and sits next to you wearing a pair of shoes you like. A picture pulls up the exact brand, whereas a text-based search like “what shoes are these?” does not suffice.

Below is a sample of some questions that visual search is better at answering compared to text search.

What is this?

How can I replace this part?

What goes with this?

What does this mean?

What is this called?

What’s wrong with this?

How can marketers take advantage of Visual Search?

Visual search is in the early stages of growth, and currently no widespread advertising options have been developed for visual searches. But as visual search becomes more popular, advertising opportunities are likely to become more available.

Some tactics marketers could deploy now to take advantage of visual search include:

Integrate with chatbots: Retailers can have a chatbot initiate a conversation based on a picture a user takes. This partially solves the problem of intent. Let’s say you take a picture of a foreign-labeled food product. A chatbot then pops up and asks whether you would like a translation of the label or a list of similar products.

Build an image library of products: Retailers should make sure to have an image that accompanies all products they sell, so that they can be picked up by visual search engines in the future.

Combine visual search with text: Rather than view visual search as competing with text search, marketers should find ways to integrate the two.

Increase presence on image-centric social media: Google, Pinterest, Instagram and Snapchat are leading the way in terms of integrating visual search, so organizing photos on these platforms in a structured manner will help them become more readily identifiable by visual search engines.

Easily identify items in pictures: Retailers should make it easy for different items in images to be identified. This will make it easy for users to click on the objects or items in photos they want to learn more about, the way Bing and Google Lens have started to do.

Make product information easily available: Information about the price and availability of a product that gets pulled up by a visual search should be easily available and clear to read for users.

Follow traditional image SEO practices: Of course, marketers should continue to follow traditional SEO practices for images, such as adding metadata and alternative text that describes the image as accurately as possible.
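For the last two tactics, one possible approach is to generate each product's image tag and its structured data from the same record, so the alt text, price and availability never drift apart. The sketch below uses the schema.org Product and Offer vocabulary; the function name, the sample product and the example.com URL are invented for illustration, and nothing here is specific to any one visual search engine.

```python
import html
import json


def product_markup(name, image_url, alt_text, price, currency, in_stock):
    """Build an <img> tag with alt text plus schema.org Product JSON-LD."""
    img_tag = '<img src="{}" alt="{}">'.format(
        html.escape(image_url, quote=True),
        html.escape(alt_text, quote=True),
    )
    structured = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "image": image_url,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": (
                "https://schema.org/InStock"
                if in_stock
                else "https://schema.org/OutOfStock"
            ),
        },
    }
    script = (
        '<script type="application/ld+json">'
        + json.dumps(structured, indent=2)
        + "</script>"
    )
    return img_tag, script


# Hypothetical product record.
img, script = product_markup(
    name="Oak dining table",
    image_url="https://example.com/img/oak-table.jpg",
    alt_text='Oak "farmhouse" dining table seating six',
    price=499,
    currency="USD",
    in_stock=True,
)
print(img)
print(script)
```

The alt text describes what is actually in the picture, and the price and stock status sit in machine-readable form next to it.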

Does this mean text-based search is dead?

Of course not.

Despite the attractiveness of visual search, it suffers from the same problems as voice and video searches.

The technology is not yet there, and until massive advances are made in AI, users will likely continue to prefer text over visual searches.

This means that text-based searches will remain the bread and butter for most PPC marketers in the near term.

At the same time, adopting a long-term view of where search technology is headed never hurts. While visual search is in the early stages of development, marketers that position themselves now to capitalize on the growth of visual search in the future could see major payoffs down the road.

Try Aori

Want to prepare yourself for the rise of visual search? Check out our smart advertising solutions to see how they can help you manage your campaigns in a visual-first world.